News

- January, 2023 : Final journal version available.

- May, 2022 : Presentation video uploaded.

- May, 2022 : Paper was presented as an invited presentation at I3D 2022.

- December, 2021 : Web launched.

- December, 2021 : Paper accepted at TVCG.

Abstract

We present a deep learning-based method for propagating spatially-varying visual material attributes (e.g. texture maps or image stylizations) to larger samples of the same or similar materials. For training, we leverage images of the material taken under multiple illuminations and a dedicated data augmentation policy, making the transfer robust to novel illumination conditions and affine deformations. Our model relies on a supervised image-to-image translation framework and is agnostic to the transferred domain; we showcase a semantic segmentation, a normal map, and a stylization. Following an image analogies approach, the method only requires the training data to contain the same visual structures as the input guidance. Our approach works at interactive rates, making it suitable for material edit applications. We thoroughly evaluate our learning methodology in a controlled setup providing quantitative measures of performance. Last, we demonstrate that training the model on a single material is enough to generalize to materials of the same type without the need for massive datasets.

Presentation

We presented this paper at I3D 2022. Please use this link to see the presentation and discussion. The raw 4K presentation is included below:

Resources

- Preprint High-Resolution [PDF, 93.8 MB]

- Preprint Low-Resolution [PDF, 23.0 MB]

- Supplementary material [PDF, 31.4 MB]

- Journal link (TVCG)

- Arxiv Link

Results

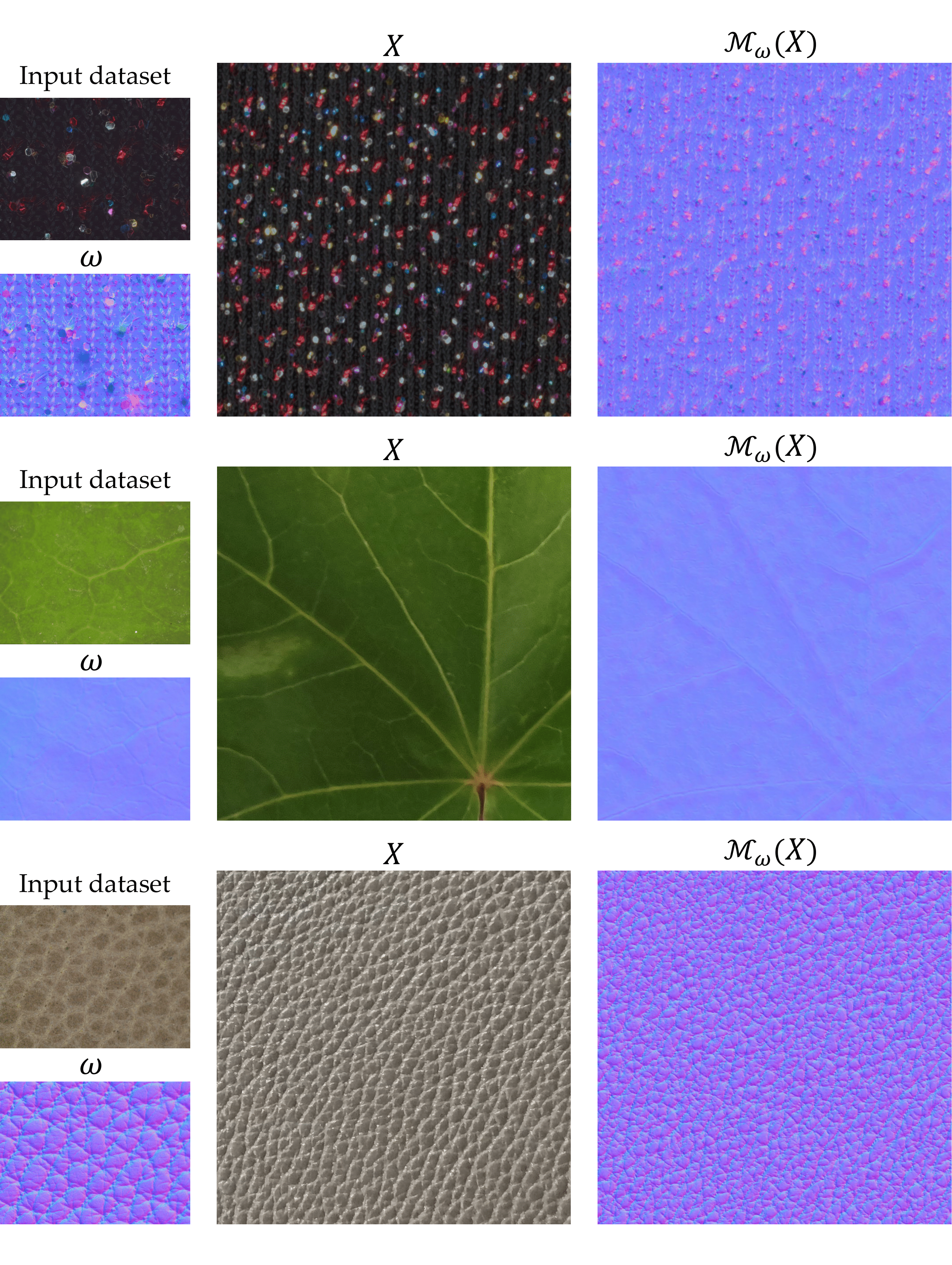

To train our models, we leverage a photometric dataset, which is composed of several images of a material, captured under different illumination conditions:

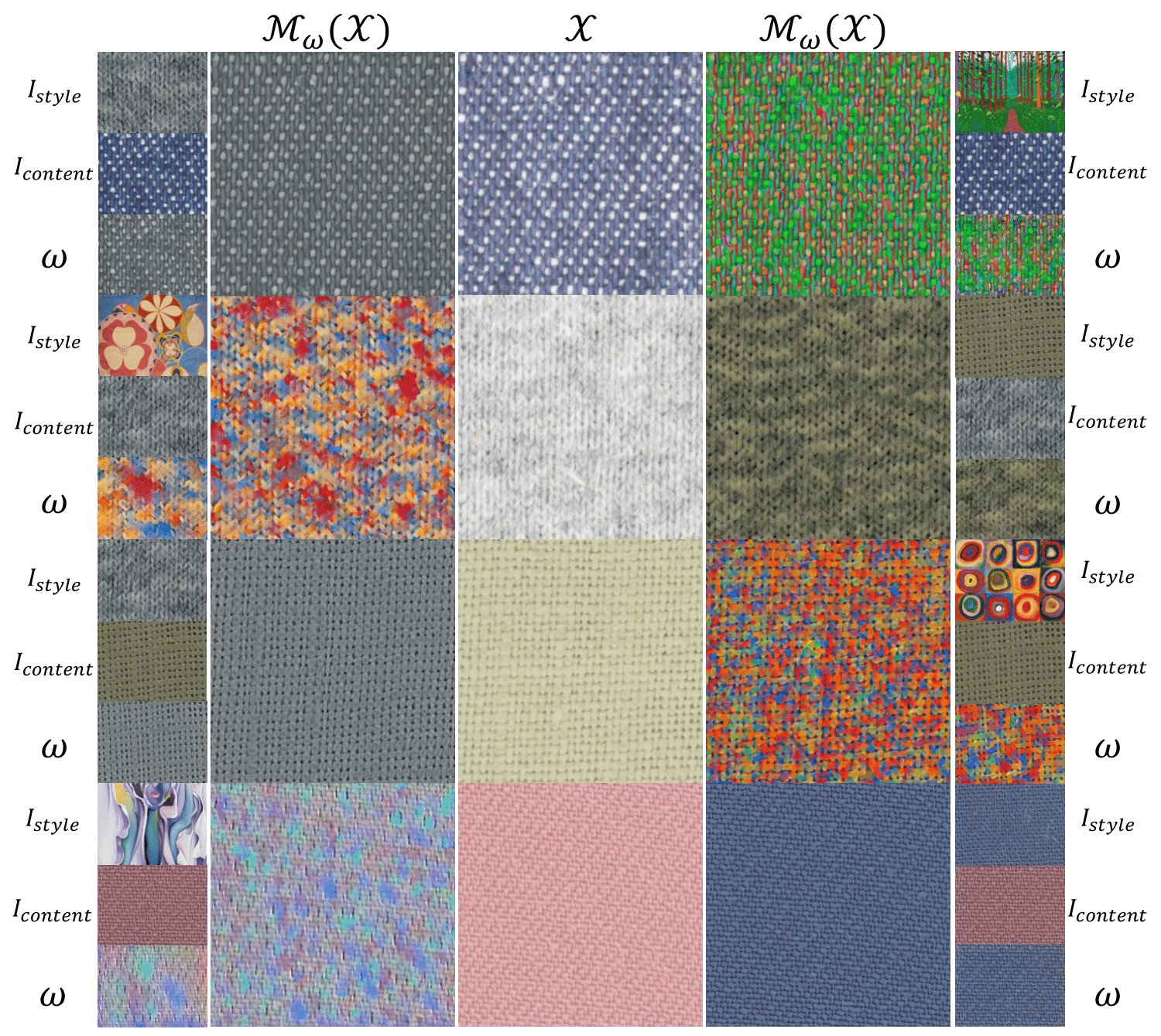

Using this photometric dataset, we can learn mappings between images of the material and corresponding visual attributes, which may include SVBRDF maps, semantic segmentation maps or image stylizations.
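As an illustration of how such a dataset can supervise a mapping, the sketch below pairs every illumination of the material with the same attribute map and samples aligned patches from both. It is a minimal example and not the paper's data pipeline: the function names, the patch-based sampling, and the representation of images as nested lists are assumptions made for this example.

```python
import random

def crop(grid, top, left, size):
    """Cut a size x size window out of a 2D grid (a list of rows)."""
    return [row[left:left + size] for row in grid[top:top + size]]

def make_training_pairs(illumination_images, attribute_map,
                        patch_size, num_patches, seed=0):
    """Sample aligned (input patch, target patch) pairs.

    Assumption: every image in the stack shares the pixel grid of
    attribute_map, so the same crop window is valid for both.
    """
    rng = random.Random(seed)
    height, width = len(attribute_map), len(attribute_map[0])
    pairs = []
    for _ in range(num_patches):
        # Any illumination may serve as input; the target never changes,
        # which is what pushes the learned mapping toward illumination
        # invariance.
        image = rng.choice(illumination_images)
        top = rng.randint(0, height - patch_size)
        left = rng.randint(0, width - patch_size)
        pairs.append((crop(image, top, left, patch_size),
                      crop(attribute_map, top, left, patch_size)))
    return pairs
```

Because the target patch is identical no matter which illumination is drawn, a model fitted to these pairs has to explain the same attributes under every lighting condition in the stack.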

This mapping is learned by a shallow, fully convolutional U-Net, which can be trained in less than one minute and evaluated in milliseconds, enabling a novel illumination-invariant, interactive material editing framework. We name this model photometricNet.

Our method works with a diverse set of materials, including fabrics, leathers, wood, and leaves. The guidance images can be captured with a smartphone and may exhibit affine geometric distortions, which our models handle thanks to a dedicated data augmentation policy.
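One way to picture an augmentation policy of this kind is to warp each training image and its attribute map with the same random affine transform, so the pair stays pixel-aligned. The sketch below is an assumption-laden illustration, not the policy used in the paper: the parameter ranges, the nearest-neighbour sampling, the wrap-around border handling, and all function names are hypothetical.

```python
import math
import random

def random_affine(rng, max_rotation_deg=30.0, scale_range=(0.8, 1.2), max_shear=0.2):
    """Return a 2x2 matrix combining rotation, shear and uniform scale.

    The ranges are illustrative assumptions, not values from the paper.
    """
    theta = math.radians(rng.uniform(-max_rotation_deg, max_rotation_deg))
    scale = rng.uniform(*scale_range)
    shear = rng.uniform(-max_shear, max_shear)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    # Product of rotation [[c, -s], [s, c]] and shear [[1, sh], [0, 1]].
    return [[scale * cos_t, scale * (cos_t * shear - sin_t)],
            [scale * sin_t, scale * (sin_t * shear + cos_t)]]

def warp(grid, matrix):
    """Resample a 2D grid with nearest-neighbour lookup.

    The matrix maps output coordinates (relative to the image centre) to
    source coordinates; out-of-range lookups wrap around, which treats the
    material as a tileable texture (an assumption of this sketch).
    """
    height, width = len(grid), len(grid[0])
    cy, cx = (height - 1) / 2, (width - 1) / 2
    (a, b), (c, d) = matrix
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = x - cx, y - cy
            src_x = round(a * dx + b * dy + cx)
            src_y = round(c * dx + d * dy + cy)
            row.append(grid[src_y % height][src_x % width])
        out.append(row)
    return out

def augment_pair(image, attribute_map, rng):
    """Apply one shared random affine warp to an image and its target."""
    matrix = random_affine(rng)
    return warp(image, matrix), warp(attribute_map, matrix)
```

Sharing one matrix per pair is the important design choice here: warping the input alone would break the pixel-wise correspondence that supervised image-to-image training relies on.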

PhotometricNet can also be used to predictably propagate image stylizations, which can be generated manually by artists or by state-of-the-art neural style transfer algorithms.

PhotometricNet provides real-time estimations, which are both shift-equivariant and illumination-invariant.
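Shift equivariance means that translating the input translates the output by the same amount. The sketch below checks this property for a single convolution with circular padding, the basic building block of a fully convolutional network. It is a toy check under stated assumptions and does not evaluate PhotometricNet itself; the function names are hypothetical. In general, networks with strided downsampling can be exactly equivariant only to shifts that are multiples of their total stride.

```python
def circular_shift(grid, dy, dx):
    """Translate a 2D grid by (dy, dx) pixels with wrap-around."""
    height, width = len(grid), len(grid[0])
    return [[grid[(y - dy) % height][(x - dx) % width] for x in range(width)]
            for y in range(height)]

def circular_conv(grid, kernel):
    """Cross-correlate a 2D grid with a square, odd-sized kernel.

    Borders wrap around, so the output has the same size as the input.
    """
    height, width = len(grid), len(grid[0])
    k = len(kernel) // 2
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            total = 0
            for i, kernel_row in enumerate(kernel):
                for j, weight in enumerate(kernel_row):
                    total += weight * grid[(y + i - k) % height][(x + j - k) % width]
            row.append(total)
        out.append(row)
    return out

def is_shift_equivariant(grid, kernel, dy, dx):
    """Check that filtering a shifted input equals shifting the filtered input."""
    shifted_then_filtered = circular_conv(circular_shift(grid, dy, dx), kernel)
    filtered_then_shifted = circular_shift(circular_conv(grid, kernel), dy, dx)
    return shifted_then_filtered == filtered_then_shifted
```

An analogous check for illumination invariance would compare the predictions obtained from two differently lit images of the same material region and measure how much they differ.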

Bibtex

Acknowledgements

Elena Garces was partially supported by a Torres Quevedo Fellowship (PTQ2018-009868). We thank Jorge López-Moreno for his feedback and David Pascual for his help with the creation of property maps.